


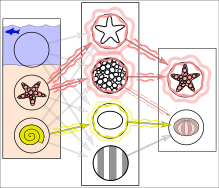

Simplified example of training a neural network in object detection: The network is trained on multiple images that are known to depict starfish and sea urchins, which are correlated with "nodes" that represent visual features. The starfish match with a ringed texture and a star outline, whereas most sea urchins match with a striped texture and an oval shape. However, one instance of a ring-textured sea urchin creates a weakly weighted association between the ringed texture and the sea urchin.

Subsequent run of the network on an input image (left):[1] The network correctly detects the starfish. However, the weakly weighted association between ringed texture and sea urchin also confers a weak signal to the latter from one of two intermediate nodes. In addition, a shell that was not included in the training gives a weak signal for the oval shape, also resulting in a weak signal for the sea urchin output. These weak signals may result in a false positive result for sea urchin.

In reality, textures and outlines would not be represented by single nodes, but rather by associated weight patterns of multiple nodes.


A feedforward neural network (FNN) is one of the two broad types of artificial neural network, characterized by the direction of the flow of information between its layers.[2] Its flow is unidirectional: information moves only forward, from the input nodes, through the hidden nodes (if any), to the output nodes, without any cycles or loops.[2] This contrasts with recurrent neural networks,[3] which have a bi-directional flow. Modern feedforward networks are trained using the backpropagation method[4][5][6][7][8] and are colloquially referred to as "vanilla" neural networks.[9]
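The one-directional flow described above can be illustrated with a minimal sketch of a forward pass. This is not taken from the cited sources: the layer sizes, the hand-picked weights, the sigmoid activation, and the names `dense` and `forward` are all assumptions chosen for illustration, and training by backpropagation is not shown.

```python
import math


def sigmoid(x):
    """Logistic activation (an assumed choice; other activations are common)."""
    return 1.0 / (1.0 + math.exp(-x))


def dense(inputs, weights, biases):
    """Compute one fully connected layer.

    Each output node is the sigmoid of a weighted sum of all inputs plus a bias.
    `weights` holds one row of input weights per output node.
    """
    return [
        sigmoid(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


def forward(inputs, layers):
    """Pass activations through the layers strictly in order.

    No layer's output is ever fed back to an earlier layer, which is what
    makes the network feedforward rather than recurrent.
    """
    activations = inputs
    for weights, biases in layers:
        activations = dense(activations, weights, biases)
    return activations


# Hypothetical network: 2 input nodes -> 3 hidden nodes -> 1 output node.
# The weights are arbitrary illustrative values, not trained parameters.
layers = [
    ([[0.5, -0.2], [0.1, 0.4], [-0.3, 0.8]], [0.0, 0.1, -0.1]),
    ([[0.7, -0.5, 0.2]], [0.05]),
]

output = forward([1.0, 0.5], layers)
print(output)  # a single value between 0 and 1
```

Because the computation contains no cycles, calling `forward` twice with the same input and weights gives the same result; a recurrent network, by contrast, would carry state from one step to the next.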

- ^ Ferrie, C.; Kaiser, S. (2019). Neural Networks for Babies. Sourcebooks. ISBN 1492671207.

- ^ a b Zell, Andreas (1994). Simulation Neuronaler Netze [Simulation of Neural Networks] (in German) (1st ed.). Addison-Wesley. p. 73. ISBN 3-89319-554-8.

- ^ Schmidhuber, Jürgen (2015-01-01). "Deep learning in neural networks: An overview". Neural Networks. 61: 85–117. arXiv:1404.7828. doi:10.1016/j.neunet.2014.09.003. ISSN 0893-6080. PMID 25462637. S2CID 11715509.

- ^ Cite error: the named reference lin1970 was invoked but never defined.

- ^ Cite error: the named reference kelley1960 was invoked but never defined.

- ^ Rosenblatt, Frank (1961). Principles of Neurodynamics: Perceptrons and the Theory of Brain Mechanisms. Washington DC: Spartan Books.

- ^ Cite error: the named reference werbos1982 was invoked but never defined.

- ^ Rumelhart, David E.; Hinton, Geoffrey E.; Williams, R. J. (1986). "Learning Internal Representations by Error Propagation". In Rumelhart, David E.; McClelland, James L.; PDP research group (eds.). Parallel Distributed Processing: Explorations in the Microstructure of Cognition, Volume 1: Foundation. MIT Press.

- ^ Hastie, Trevor; Tibshirani, Robert; Friedman, Jerome (2009). The Elements of Statistical Learning: Data Mining, Inference, and Prediction. New York, NY: Springer.
